Mapping the Fibonacci lattice (aka Golden Spiral, aka Fibonacci Sphere) onto the surface of a sphere is an extremely fast and effective approximate method to evenly distribute points on a sphere. I show how small modifications to the canonical implementation can result in notable improvements for nearest-neighbor measures.

tl;dr: Get a summary of this post via ChatGPT here.

First published: 07 June, 2020

Last modified: 29 Jan, 2024

This is an updated version of my most popular post “Evenly distributing points on a sphere”.

This appeared on the front page of HackerNews in 2021 and also in 2018.

0. The Fibonacci lattice

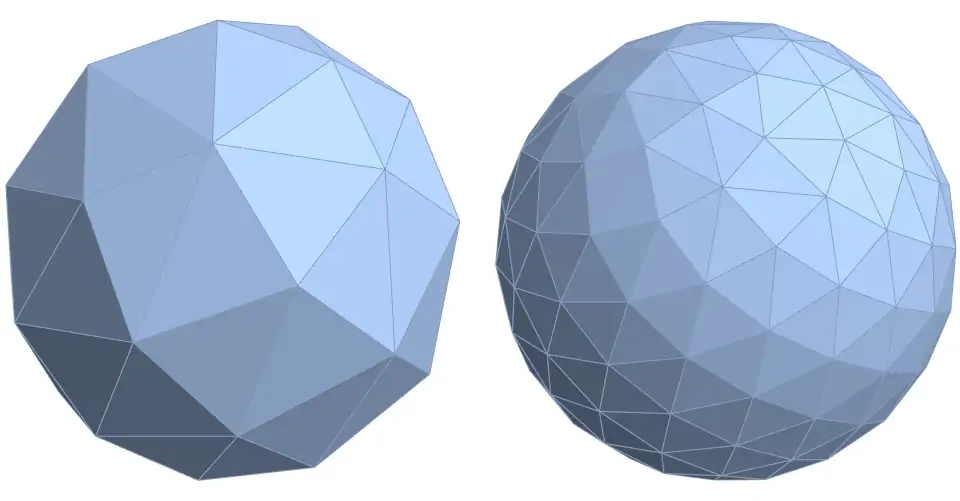

The problem of how to evenly distribute points on a sphere has a very long history. It is of huge signficance in many areas of mathematics, physics, chemistry and computing – including numerical analysis, approximation theory, crystallography, viral morphology, electrostatics, coding theory, and computer graphics, to name just a few.

Unfortunately, except for a small handful of cases, it still has not been exactly solved. Therefore, in nearly all situations, we can merely hope to find near-optimal solutions to this problem.

Of these near-optimal solutions, one of the most common simple methods is one based on the Fibonacci lattice or the Golden Spiral. This method was apparently inspired by phyllotaxis (which describes the seed distribution on the head of a sunflower or a pine cone).

Furthermore, unlike many of the other iterative or randomised methods such a simulated annealing, the Fibonacci spiral is one of the few direct construction methods that works for arbitrary $n$.

The usual modern definition of the Fibonacci lattice (see figure 2, top row), which evenly distributes $n$ points inside a unit square $[0, 1)^2$ is:

$$ t_i = (x_i, y_i) = ( \lfloor \frac{i }{\varphi} \rfloor , \frac{i}{n} ) \quad \textrm{for } \; 0 \leq i < n \tag{1} $$

where

$$\varphi = \frac{1+\sqrt{5}}{2} = \lim_{n \rightarrow \infty} \left( \frac{F_{n+1}}{F_n} \right)$$

and the $\lfloor \cdot \rfloor $ operator denotes the fractional part of the argument.

(This method also works very well for rectangles, too.)

To produce the Fibonacci spiral, aka the Golden Spiral (see figure 2, bottom row), you simply map this point distribution to a unit disk, via the equal-area transformation

$$ (x,y) \rightarrow (\theta, r) : \quad (2\pi x, \sqrt{y}) $$.

Similarly, these point sets can be mapped from the unit square $[0, 1)^2$ to the sphere $S^2$ by the cylindrical equal-area projection (see figure 2):

$$ (x_i,y_i) \rightarrow (\theta_i, \phi_i) : \quad \left(2 \pi x_i, \arccos(1-2y_i) \right) $$

$$ (\theta_i, \phi_i) \rightarrow (x_i, y_i ,z_i) \quad ( \cos \theta_i \sin \phi_i, \sin \theta_i \sin \phi_i, \cos \phi_i ) $$

Note that there are numerous conventions for labelling angles in spherical geometry. The above formula uses the notation that $\theta \in [0,2\pi]$ is the longitude and $\phi \in [0,\pi]$ is the angle from North pole.

That is, the Fibonacci lattice is a simple way to very evenly distribute points in a rectangle, disk or the surface of a sphere.

(See footnote #2, on why the Fibonacci lattice does not wrap on all edges, and why this is a good thing.)

Here’s a basic implementation of this in Python.

from numpy import arange, pi, sin, cos, arccos

n = 50

goldenRatio = (1 + 5**0.5)/2

i = arange(0, n)

theta = 2 *pi * i / goldenRatio

phi = arccos(1 - 2*(i)/n)

x, y, z = cos(theta) * sin(phi), sin(theta) * sin(phi), cos(phi);

Note that althouigh this is the most common formula and implementation ofthe Fibonacci mapping. A very small change can lead to a notable improvement. This small change in listed in the next section called “Canonical Lattice”.

This spiral and spherical mapping both suffer from two distinct but inter-related problems.

The first is that this mapping is area-preserving, not distance-preserving. If you are attempting to optimize distance measures such as those related to nearest-neighbor, then it is not guaranteed that such distance-based constraints and relationships will hold after an area-based projection.

The second, and from a pragmatic point of view possibly the trickiest to resolve, is that the spiral mapping has a singularity point at the centre (and the spherical mapping has a singularity at each pole). In the spherical case, consider two points very close to the pole but 180 degrees different in longitude. On the unit square, (and also on the cylindrical projection) they would correspond to two points that are near the top edge, but still quite distant from each other. However, when mapped to the surface of the sphere they could be joined be a very small great arc going over the north pole. It is this particular issue that makes many of the spiral mappings sub-optimal. This issue does not exist for the fibonacci lattice in $[0,1)^2$.

1. Optimizing minimum nearest-neighbor distance

There are many equally valid criteria that are used to describe how evenly distributed the points on the surface of a sphere are. These include:

-

- Packing and covering

- Convex hulls, Voronoi cells and Delaunay triangles,

- Riesz $s$-energy kernels

- Cubature and Determinants

It is critical to realise that an optimal solution based on one criteria is often not an optimal point distribution for another.

In this post, we only explore two of these measures. This main section looks at the most famous measure: minimum nearest-neighbor distance; while the next section briefly describes average nearest-neighbor distance. (See foonote #3, on exploring how to optimize the Fibonacci lattice for other measures).

The problem of finding the maximum nearest-neighbor is often referred to as “Tammes’s problem” due to the botanist Tammes, who searched for an explanation of the surface structure of pollen grains. It’s also often called Fejes Tóth’s problem. And the configuration corresponding to this optimal nearest neighbor is often called a spherical code.

Platonic Solids

One myth that is important to dispel is the belief that all the Platonic solids are the optimal configurations for their respectively corresponding values of $n$.

Unfortunately, this is not true. This is succintly described on the Wolfram mathworld site:

“For two points, the points should be at opposite ends of a diameter. For four points, they should be placed at the polyhedron vertices of an inscribed regular tetrahedron. There is no unique best solution for five points since the distance cannot be reduced below that for six points. For six points, they should be placed at the polyhedron vertices of an inscribed regular octahedron. For seven points, the best solution is four equilateral spherical triangles with angles of 80 degress. For eight points, the best dispersal is not the polyhedron vertices of the inscribed cube, but of a square antiprism with equal polyhedron edges. The solution for nine points is eight equilateral spherical triangles with angles of $\arccos(\frac{1}{4})$. For 12 points, the solution is an inscribed regular icosahedron.”

Let’s repeat this point, as it is not obvious and not well-known:

Two of the platonic solids, the cube ($n=8$) and the dodecahedron $(n=20)$ are not the optimal point configurations for minimum nearest-neighbor distance. And actually, they are far from it.

So before we explore how to improve the canonical Fibonacci lattice for arbitrary $n$, let’s set up some notation.

If, for each of the $n$ points, we define $\delta_i$ to be distance from point $i$ to its closest neighbor $(0 \leq i < n$). Then Tammes’s problem asks us to maximize the minimum of $d_i$ over all $n$ points. That is,

$$ \delta_{\rm{min}}(n) = \min_{0\leq i < n} \delta_i $$

This value decreases at a rate ~$1/\sqrt{n}$, so it is also useful to define the normalized distance, and also the asymptotic limit of the normalized distance as

$$ \delta^*(n) = \sqrt{n} \delta(n) ,\quad \quad \delta^* = \lim_{n \rightarrow \infty} \delta^*(n) $$

Canonical lattice

The standard implementation (as listed in the code above), divides the unit interval $[0,1)$ into $n$ equal subintervals, and then places a point at the midpoint of each of these intervals (aka the midpoint rule in gausssian quadrature). That is,

$$ t_i (\theta, \phi) = \left( \frac{i}{\varphi}, \frac{i{+0.5}}{n} \right) \quad \textrm{for }\; 0 \leq i < n \tag{1}$$

Despite the seemingly trivial inclusion of an additional 1/2 factor to the common algorithm, this new version of the Fibonacci spiral produces stunningly impressive results.

Here’s a basic implementation of this in Python.

from numpy import arange, pi, sin, cos, arccos

n = 50

goldenRatio = (1 + 5**0.5)/2

i = arange(0, n)

theta = 2 *pi * i / goldenRatio

phi = arccos(1 - 2*(i+0.5)/n)

x, y, z = cos(theta) * sin(phi), sin(theta) * sin(phi), cos(phi);This method produces a consistent value of $\delta^*_n=3.09$ for all $n$.

** interlude **

When mapping these points to the surface of a sphere, one notices that the points are very evenly spread out across the surface. One way, to measure how far apart these points are is to imagine for each of these points, a radial line — originating at the centre of the sphere and ending at each point on a sphere. We can then measure the angle between these rays, simply by taking a dot product of relevant vectors. For example, we can simply consider the first two points, then using the equations above we can calculate both

$$ (x_0,y_0,z_0) \quad \text{and} \quad (x_1,y_1,z_1) $$

and then take the dot product between these, which will give the cosine of the angle between these.

Listed below is a basic table for the radial angle between neighbouring points for various n. We can then construct the points by forming triplets of radial lines, where the angle between each of these neighbouring lines is given below.

(n, angle):

(3,61.7),(4,53.3),(5,47.5),(6,43.2),(7,39.9),(8,37.2),(9,35),(10,33.2),(11,31.6),(12,30.2),(13,29),(14,28),(15,27),(16,26.1),(17,25.3),(18,24.6),(19,23.9),(20,23.2),

(25,20.8),(30,19),(35,17.6),(40,16.5),(45,15.5),(50,14.7),(55,14),(60,13.4),(65,12.9),(70,12.4),(75,12),(80,11.6),(85,11.3),(90,10.9),(95,10.7),(100, 10.4)

Offset Fibonacci Lattice.

A key insight to improving on Equation 1, is to realize that the $\delta_\rm{min}(n)$ always corresponds to the distance between the points $t_0$ and $t_3$, which are located near the poles. Thus, to improve $\delta(n)$ the points near the poles should be positioned farther apart.

To achieve this, we need to move (offset) all the points slightly farther away from the poles. This of course means, that almost all of them become slightly closer together. However, as they are not the bottleneck for optimizing $\delta_\rm{min}(n)$, then in this situation, this is tolerable.

Specifically, we can achieve this by defining a sub-interval of length $\epsilon$ on each end of the interval $(0,1)$ where there are no points, and then we equally space the $n$ points on the remaining interval $(\epsilon, 1{-}\epsilon)$ ensuring that there is a point at each end of this interval.

That is,

$$ t_i(\varepsilon) = \left( \frac{i{+ \varepsilon}}{n{-1}{+2\varepsilon}}, \frac{i}{\varphi} \right) \quad \textrm{for }\; 0 \leq i < n \tag{2}$$

Note that $\varepsilon=\frac{1}{2}$ corresponds to canonical lattice, and $\varepsilon>\frac{1}{2}$ represents an increased gap near the poles.

Through methods such as Monte Carlo, for each $n$ we can empirically find the optimal value of $\varepsilon$ that optimizes packing distance. Let’s denote this optimal value as $\varepsilon^*(n)$.

The bad news is that there does not seem to be a simple closed expression for $\varepsilon^*(n)$. However, the good news is that some very elegant properties can be found.

The first is that the function $\varepsilon^*(n)$ is not a continuous function, but more like a step function, (significantly) increasing only at certain values of $n$. Furthermore, the corresponding values of $\varepsilon(n)$ are nearly always very close to a round number. More specfically, empirical analysis shows that the following table is an excellent description for the best values of $\varepsilon$:

$\begin{array}{|rcccl|r|} \hline

&&&&& \varepsilon(n) \\ \hline

\quad & &n &<&24 & 0.33\\\\

24 &\leq &n &<&177 & 1.33\\\\

177 &\leq &n &< &890 & 3.33 \\\\

890 &\leq &n &<&11,000 & 10 \\\\

11,000 &\leq &n &<&39,000 & 27 \\\\

400,000 &\leq &n &<&600,000 & 75\\\\

600,000 &\leq &n & \quad && 214\\\\ \hline

\end{array}$

A basic implementation of this offset-lattice is shown.

from numpy import arange, pi, sin, cos, arccos

n = 50

if n >= 600000:

epsilon = 214

elif n>= 400000:

epsilon = 75

elif n>= 11000:

epsilon = 27

elif n>= 890:

epsilon = 10

elif n>= 177:

epsilon = 3.33

elif n>= 24:

epsilon = 1.33

else:

epsilon = 0.33

goldenRatio = (1 + 5**0.5)/2

i = arange(0, n)

theta = 2 *pi * i / goldenRatio

phi = arccos(1 - 2*(i+epsilon)/(n-1+2*epsilon))

x, y, z = cos(theta) * sin(phi), sin(theta) * sin(phi), cos(phi);Offsetting the points of the Fibonacci lattice slightly away from the poles (as defined in equation 2) produces a packing $\delta^*_{\rm{min}}$, that is up to 8\% tighter than the canonical Fibonacci lattice.

\begin{array}{|r|rr|r|} \hline

n & \rm{canonical} & \rm{new} & \rm{increase} \\ \hline

10 & 3.09 & 3.13 & 1.006\\

20 & 3.09 & 3.12 & 1.011\\

50 & 3.09 & 3.21 & 1.041\\ \hline

100 & 3.09 & 3.25 & 1.051 \\

200 & 3.09 & 3.27 & 1.057 \\

500 & 3.09 & 3.30 & 1.066 \\ \hline

1,000 & 3.09 & 3.31 & 1.070\\

2,000 & 3.09 & 3.33 & 1.076 \\

5,000 & 3.09 & 3.34 & 1.078 \\ \hline

10,000 & 3.09 & 3.34 & 1.080\\

20,000 & 3.09 & 3.34 & 1.080\\

50,000 & 3.09 & 3.35 & 1.082\\ \hline

\end{array}

(See footnote #4 on additional ways to modify the canonical lattice that increases $\delta_{\rm{min}}$ even further.)

Comparison

We can compare this result with some of the state-of-the-art methods which are typically complex and require recursive and/or dynamic programming are as follows (the higher the better).

\begin{array}{|lr|} \hline

\text{} & d^* \\ \hline

\text{Coulomb} & 3.37 \\

\text{Log Energy} & 3.37\\

\text{Offset Fibonacci Lattice} & \bf{3.35} \\

\text{Zonal Equal Area} & 3.32 \\

\text{Max Determinant} & 3.19 \\

\text{Canonical Fibonacci Lattice} & 3.09\\ \hline

\end{array}

2. Corollary. Preventing large gaps

For large value of $n$, (especially $n>1000$), when optimzing packing distance, the optimal value of $\varepsilon$ is quite large, and the effect of this is a noticeable gap or void at each pole.

A simple technique to avoid this gap, is to place a point at each pole, and then place the remaining $n{-}2$ points as per equation 2 with an revised offset $\varepsilon_2$as per the table below.

Not only does this minimize the gap at each pole, but it also offers a tiny improvement on the offset lattice as defined in equation 2. The size of the improvement is very small – only up to 1%, and typically less than 0.1%.

Therefore, in regards to packing distance it probably is probably more for theoretical interest than for any practical purpose. However, in most cases it is probably worth it to ensure wide applicability for the resulting configuration.

\begin{array}{|rcccl|r|} \hline

&&&&& \varepsilon_2(n) \\ \hline

\quad & &n &<&80 & 2.66\\

80 &\leq &n &<&1000 &3.33\\

1000&\leq &n &< &40000& 10\\

40,000 &\leq &n & \quad && 25\\ \hline

\end{array}

3. Optimizing average nearest-neighbor distance

Although maximizing minimum nearest neigbor is the most studied and is extremly useful from a theoretical perspective, it might not be the most useful one for many engineering and computational applications.

Unfortunately, optimzing for minimum nearest neighbor, produces less than optimal configurations for average nearest neighbor. This is not surprising as the offset pushes all but a small handful of points away from the poles, and thus closer together.

It turns out that for all $n$, there is essentially a single value of $\varepsilon$ that offers the best configuration. Namely, $\varepsilon = 0.36$.

Note that this value is less than 0.5, so the points are actually pulled closer to the poles compared to the canonical implementation!

Although offsetting the points with $\varepsilon= 0.36$ produces suboptimal configurations for packing distance, it produces a optimal configurations for average nearest neighbor measure. This makes it ideal for most engineering and computing applications.

5. Summary

Mapping the Fibonacci lattice (aka Golden Spiral, aka Fibonacci Sphere) onto the surface of a sphere is an extremely fast and effective approximate method to evenly distribute points on a sphere.

However, by offsetting the points of the Fibonacci lattice slightly away from the poles (as defined in equation 2), results in a point onfiguration that is up to 8.3\% tighter than the canonical Fibonacci lattice (in terms of packing distance).

\begin{array}{|r|rr|r|} \hline

n & \rm{canonical} & \rm{new} & \rm{increase} \\ \hline

100 & 3.09 & 3.25 & 1.051\\

200 & 3.09 & 3.27 & 1.05.7\\

500 & 3.09 & 3.30 & 1.066 \\ \hline

1,000 & 3.09 & 3.31 & 1.070\\

2,000 & 3.09 & 3.33 & 1.076\\

5,000 & 3.09 & 3.34 & 1.078 \\ \hline

10,000 & 3.09 & 3.34 & 1.080\\

20,000 & 3.09 & 3.34 & 1.080\\

50,000 & 3.09 & 3.35 & 1.082\\ \hline

\end{array}

For $n>100$, an improvement can be made beyond this, by initially placing a point at each pole, and then placing the remaining $n-2$ points as per equation 2 with an offset as per table in section 4. This not only (very sightly) improves minimal nearest packing, but it also prevents a large gap at each pole.

Footnotes

#1. Technically, a sphere only consists of all points that are equidistant from the centre. This set of all such points forms a surface. Therefore, the phrase ‘the surface of the sphere’ is a tautology. However, in this post, I frequently use this phrase to ensure there is no confusion amongst those readers, regardless of their technical background.

#3. The mod 1 operator in the Fibonacci lattice (see figure 1, top row) indicates that we are really mapping the unit square to a cylinder. It is often considered that this new space is a torus, but careful observation shows that points near the top edge and the bottom edge do not precisely align.

In our case, where we are mapping the lattice to the disc or to the surface of a sphere, this actually works in our favor as it reduces the constraints and therefore allows a higher degree of freedom when finding good point separations. This is one reason why mapping Fibonacci lattice to the surface of the sphere nearly always produces better results than mapping a typical two-dimensional low discrepancy sequence (where all the edges wrap) to the surface of the sphere.

#3. This post explores measures relating to nearest neighbor. To optimize the point configurations such that the volume of the convex hull is maximized, see my previous post, “Evenly distributing points on a sphere”.)

#4. Moving the points farther and farther away from the poles, suggests that there is a threshold where it is constructive to place a point at each of the poles. Details of this were explored in the previous post on this topic “evenly distributing points on a sphere“. However, with these newly found optimal values of $\varepsilon$, this additional complexity only improves $\delta_{\rm{min}}$ by up to 0.2%, so for most applications it is probably not worth implementing.

Key References

- Saff: A Comparison of poopular point confugrations on $S^2$“:

- Evenly_distributed_points_on_sphere

- Saff-Kuijlaars; MathIntel97

- Project Euclid; euclid.em/1067634731

- Vanderhaeghe; Spherical Fibonacci Mapping

- Leopardi; Sphere talk

- Leopardi; Sphere-PhD-Thesis

- mathworld.(wolfram); SphericalCode

- Neil Sloane: Spherical Packings

***

My name is Dr Martin Roberts, and I’m a Principal Data Science consultant, with international experience and expertise working at the intersection of mathematics and computing.

“I transform and modernize organizations through innovative data strategies solutions.”You can contact me through any of these channels.

LinkedIn: https://www.linkedin.com/in/martinroberts/

email: Martin (at) RobertsAnalytics (dot) com

More details about me can be found here.

Other Posts you might like:

5 Replies to “How to evenly distribute points on a sphere more effectively than the canonical Fibonacci Lattice”

Comments are closed.